Operational Practices for AI-Native Teams

The Frontier Pod: humans and AI agents collaborating around a shared system of intent, validation, and learning.

Navigating Engineering in the Age of AI

For most of the history of software development, the hardest part of building systems was turning ideas into code.

Engineers translated requirements into implementation. Architecture mattered, design mattered, but the real constraint was always the same.

Writing and maintaining the software itself.

AI is changing that equation.

Modern coding agents can now generate large portions of working systems. They produce functions, tests, refactors, and pull requests. The cost of implementation is collapsing.

But the cost of being wrong has not changed.

As build cost approaches zero, the bottleneck moves.

The challenge is no longer producing code.

The challenge is deciding what should exist, why it matters, and whether the result actually works.

Software delivery begins to shift from production to calibration.

Organizations that want to operate effectively in this environment must rethink how they develop talent and structure teams.

The practices that evolved when developers were the bottleneck do not translate well into an AI-native environment.

A different set of operational practices begins to emerge.

But those practices depend on a new set of engineering capabilities.

The Five Frontier Skills in AI-Native Engineering

Operating effectively with AI is not primarily about prompting.

It is about learning how to structure work so that human judgment and AI capability reinforce each other.

Five capabilities increasingly define strong practitioners in AI-native engineering environments.

1. Boundary Sensing

Boundary sensing is the ability to understand what AI can reliably do today.

Engineers constantly decide where work should be delegated and where human control must remain.

Poor boundary sensing appears in two common forms.

Some engineers continue doing work manually that agents already perform reliably.

Others delegate work that agents consistently fail at.

Strong practitioners develop intuition for where the boundary currently sits.

Agents can reliably handle many implementation tasks today:

- CRUD endpoints

- Test scaffolding

- Refactoring

- Documentation

- Bug reproduction

Humans still maintain control over areas where architectural judgment and risk dominate:

- System architecture

- Concurrency models

- Data integrity constraints

- Security design

- Failure recovery

The boundary moves quickly.

Developing good boundary sensing becomes a continuous discipline.
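Because the boundary moves, one lightweight way to treat it as a continuous discipline is to write the current delegation decisions down and flag the ones that have not been revisited recently. The sketch below is illustrative only: the `BoundaryEntry` type, the 90-day window, and the review dates are hypothetical, not part of any established tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class BoundaryEntry:
    task: str            # kind of work, e.g. "CRUD endpoints"
    owner: str           # "agent" or "human"
    last_reviewed: date  # when the team last re-tested this decision


def stale_entries(entries, today, max_age_days=90):
    """Return tasks whose delegation decision is older than the review window."""
    cutoff = today - timedelta(days=max_age_days)
    return [e.task for e in entries if e.last_reviewed < cutoff]


# Hypothetical register; dates are made up for illustration.
BOUNDARY = [
    BoundaryEntry("CRUD endpoints", "agent", date(2025, 1, 10)),
    BoundaryEntry("Security design", "human", date(2024, 6, 1)),
]

print(stale_entries(BOUNDARY, today=date(2025, 3, 1)))
```

An entry that shows up as stale is a prompt to re-run the experiment: hand the task to an agent again and see whether the boundary has moved.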

2. Seam Design

Once boundaries are understood, the next skill is designing the seams where humans and AI alternate.

This is essentially workflow architecture.

Instead of a developer performing every step of delivery, work flows through phases where agents and humans contribute at different points.

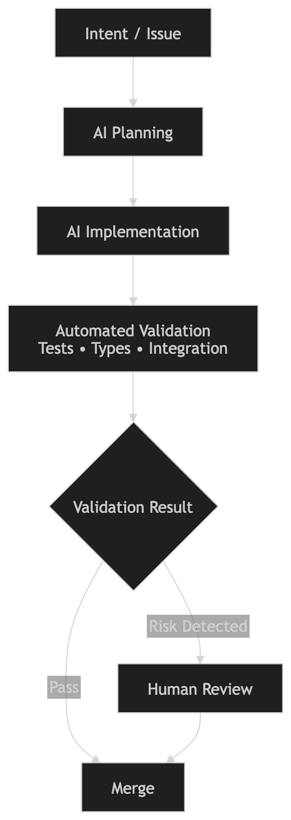

A typical agentic pipeline might look like:

The workflow is not a traditional development pipeline. It is a validation system where automated checks gate most work and humans intervene only when risk signals appear.

The seams become control points where validation occurs.

Typical seams include:

- Issue specification

- Test results

- PR diffs

- Architectural review

- Deployment signals

The architecture of the workflow becomes as important as the architecture of the software.
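The idea of seams as control points can be sketched in a few lines. In this minimal example, each seam is a gate that either passes a change or raises a risk signal, and a human is pulled in only when at least one signal appears. The `Change` fields, the gate names, and the 400-line threshold are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Change:
    title: str
    tests_passed: bool
    touches_schema: bool = False
    diff_lines: int = 0


# A gate returns a risk signal (a short string) or None when the change passes.
Gate = Callable[[Change], Optional[str]]


def check_tests(change: Change) -> Optional[str]:
    return None if change.tests_passed else "tests failing"


def check_schema(change: Change) -> Optional[str]:
    return "schema change" if change.touches_schema else None


def check_diff_size(change: Change, max_lines: int = 400) -> Optional[str]:
    if change.diff_lines > max_lines:
        return f"large diff ({change.diff_lines} lines)"
    return None


GATES = [check_tests, check_schema, check_diff_size]


def run_seams(change: Change, gates=GATES):
    """Run every gate; route to a human only if some gate raised a signal."""
    signals = [gate(change) for gate in gates]
    signals = [s for s in signals if s is not None]
    return ("human_review", signals) if signals else ("auto_merge", [])


print(run_seams(Change("add endpoint", tests_passed=True, diff_lines=50)))
print(run_seams(Change("migrate users", tests_passed=True,
                       touches_schema=True, diff_lines=500)))
```

Adding or tuning a gate changes where humans intervene without touching the rest of the workflow, which is the sense in which the workflow itself has an architecture.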

3. Failure Model Maintenance

AI systems fail in patterns.

Understanding those patterns is essential.

Common coding failures include:

- Edge cases ignored

- Concurrency bugs

- Incorrect assumptions about data shape

- Partial implementations

- Hallucinated APIs

Verification strategies must target these failure modes.

Instead of reviewing every line of code, engineers focus validation where risk is highest:

- Integration boundaries

- Schema changes

- Error handling

The failure model drives the verification strategy.
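One way to make a failure model operational is to encode it as data and let it select where validation effort goes. The sketch below maps the three risk areas named above to file patterns and reports which areas a given change touches. The path patterns are hypothetical and would differ in any real repository.

```python
from fnmatch import fnmatch

# Hypothetical failure model: risk area -> file patterns that signal it.
FAILURE_MODEL = {
    "integration boundary": ["*/clients/*", "*/api/*"],
    "schema change": ["*/migrations/*", "*.sql"],
    "error handling": ["*/handlers/*"],
}


def risk_areas(changed_files):
    """Return {risk area: [matching files]} for the files in a change."""
    hits = {}
    for area, patterns in FAILURE_MODEL.items():
        matched = [
            path for path in changed_files
            if any(fnmatch(path, pattern) for pattern in patterns)
        ]
        if matched:
            hits[area] = matched
    return hits


print(risk_areas(["src/api/users.py", "README.md"]))
```

When agents start failing in a new pattern, the update is a new entry in the model rather than a change in how every reviewer reads every diff.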

4. Capability Forecasting

Another emerging skill is understanding where automation is heading.

Several patterns are already visible in software engineering.

Tasks rapidly approaching full automation include:

- CRUD code

- Unit tests

- Documentation

- Large-scale refactoring

- Bug fixes

Meanwhile other areas remain strongly human-centered:

- Product definition

- Architecture tradeoffs

- Organizational alignment

- Safety decisions

- Risk management

The highest-leverage skills shift upstream and downstream of implementation.

Engineers gain leverage by learning to:

- Design systems

- Orchestrate agents

- Build evaluation pipelines

5. Leverage Calibration

AI systems produce far more output than humans can inspect.

Supervision models must adapt.

If an agentic system generates 100 pull requests per day, a realistic review strategy might look like:

- 90 percent automatically validated

- 8 percent sampled

- 2 percent deep inspection

This resembles reliability engineering and observability practices in distributed systems.

Engineering becomes supervisory control.

Humans guide direction, monitor signals, and intervene when risk appears.
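The 90 / 8 / 2 split above can be sketched as a simple allocation. This example assumes each pull request already carries a risk score from automated checks (the scoring itself is out of scope here). It sends the highest-risk 2 percent to deep inspection and draws a random 8 percent sample from the rest. The function name, the score format, and the fixed seed are illustrative assumptions.

```python
import random


def allocate_review(risk_scores, deep_frac=0.02, sample_frac=0.08, seed=0):
    """Split PRs into deep inspection, random sample, and auto-validated."""
    # Highest risk first.
    ranked = sorted(risk_scores, key=risk_scores.get, reverse=True)
    n_deep = round(len(ranked) * deep_frac)
    n_sample = round(len(ranked) * sample_frac)

    deep, rest = ranked[:n_deep], ranked[n_deep:]
    # Random spot checks over the remainder; seeded so the plan is reproducible.
    picked = set(random.Random(seed).sample(rest, min(n_sample, len(rest))))

    return {
        "deep_inspection": deep,
        "sampled": [pr for pr in rest if pr in picked],
        "auto_validated": [pr for pr in rest if pr not in picked],
    }


# 100 hypothetical PRs with made-up risk scores between 0.00 and 0.99.
scores = {f"PR-{i}": i / 100 for i in range(100)}
plan = allocate_review(scores)
print({tier: len(prs) for tier, prs in plan.items()})
```

With 100 pull requests this yields 2 for deep inspection, 8 sampled, and 90 auto-validated. The fractions are the tuning knobs: if sampled reviews keep finding problems, the sample fraction should grow.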

Operational Practices That Follow

Once teams develop these capabilities, several operational practices naturally emerge.

Practice environments instead of coursework.

Measure calibration instead of memorized knowledge.

Maximize feedback density instead of training hours.

Create frontier operators who explore and codify new workflows.
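Measuring calibration can be made concrete. One possible approach, not something the practices above prescribe, is to have practitioners predict the probability that an agent's output will pass validation, then score those predictions against what actually happened. The Brier score is a standard metric for this; the forecast data below is invented for illustration.

```python
def brier_score(forecasts):
    """Mean squared error between predicted probability and actual outcome.

    forecasts: list of (predicted_probability, outcome) pairs, where
    outcome is True if the agent's work passed validation.
    0.0 is perfect; always guessing 0.5 scores 0.25.
    """
    return sum((p - float(outcome)) ** 2 for p, outcome in forecasts) / len(forecasts)


# Hypothetical predictions from one engineer over four delegated tasks.
forecasts = [(0.9, True), (0.8, True), (0.7, False), (0.2, False)]
print(round(brier_score(forecasts), 3))
```

A score that falls over time suggests the practitioner's sense of what agents can do is tracking reality more closely, which is the skill this practice is trying to build.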

The Emergence of AI-Native Team Structures

Once organizations begin operating with AI in the delivery loop, team structures start to change.

Traditional engineering organizations were designed around a single constraint: software implementation was slow and expensive.

Large teams existed to move work through layers of specialization.

Product defined requirements.

Engineering implemented them.

QA validated them.

Operations deployed and monitored the result.

Each stage existed because implementation itself was the bottleneck.

AI changes that constraint.

When agents can generate large portions of working systems, the bottleneck moves away from production and toward judgment, experimentation, and validation.

Teams no longer scale primarily through additional developers. They scale through leverage.

As a result, new team shapes begin to emerge.

Two patterns appear repeatedly in organizations learning to operate effectively with AI.

The Solo Operator

In some environments a single highly skilled practitioner orchestrates a network of AI agents across multiple domains.

Instead of writing most of the implementation themselves, the operator directs a collection of specialized systems that perform large portions of the work.

The human provides:

- Problem framing

- Architectural direction

- Risk evaluation

- Validation of outputs

Agents perform:

- Implementation

- Refactoring

- Test generation

- Documentation

- Bug reproduction

- Incremental improvements

The human becomes less of a manual implementer and more of a systems operator guiding generative tools.

This model works best in environments with:

- Greenfield systems

- Short feedback loops

- Clear architectural patterns

- High individual capability

Under these conditions one operator working with AI systems can often accomplish what previously required five to ten engineers.

The result is not simply higher productivity.

It is a fundamentally different operating model.

The Frontier Pod

Most meaningful software systems require more than one perspective.

As complexity increases, organizations tend to converge on a second structure.

The Frontier Pod.

A Frontier Pod is a small, highly leveraged team of up to five people centered around a practitioner who operates at the boundary of AI capability.

This frontier operator designs and maintains the system that connects humans and agents.

Their responsibilities include:

- Designing the workflow between humans and AI

- Maintaining evaluation and verification pipelines

- Updating failure models as systems evolve

- Recalibrating where work should be automated

Frontier operators are often tasked with exploring the vision of executive leadership or helping shape that vision themselves.

Their role is to translate strategic direction into operational systems that teams and AI agents can execute.

They do not primarily produce code.

They design and maintain the system that produces code.

Around this role sits a compact group of up to four specialists, each heavily amplified by AI systems.

Engineers work alongside implementation agents, shaping architecture, directing agents, and maintaining strong craft guardrails such as testing, CI pipelines, and validation frameworks.

Designers run rapid product experiments, generating variations and integrating design directly into development workflows.

Data scientists and analysts build evaluation pipelines that measure system behavior and produce learning signals that guide future iterations.

The structure resembles a surgical team.

One person maintains awareness of the entire system while specialists contribute focused expertise within tightly coordinated workflows.

Small Teams, High Leverage

These teams remain small but extremely leveraged, often producing the work of teams five times their size.

AI agents handle large portions of implementation, experimentation, and analysis. As a result, a compact pod can often deliver the volume of work that previously required twenty or more engineers.

The change is not only productivity.

The structure of work itself changes.

Instead of large teams coordinating through layers of handoffs, a small group operates inside tight feedback loops.

- Idea

- Implementation

- Validation

- Learning

Each cycle becomes shorter.

Teams spend less time coordinating work and more time learning from outcomes.

In effect the team becomes a learning system rather than a production pipeline.

The Smallest Viable AI-Native Product Unit

When implementation becomes cheap, the core work of delivery changes.

Success depends less on how quickly software can be written and more on how quickly organizations can learn.

The critical activities become:

- Framing the right problems

- Structuring experiments

- Validating outcomes

- Learning from results

This is the operating model behind Vibe-to-Value.

Product direction becomes a hypothesis about value. Autonomous systems generate implementations rapidly, but strong evaluation systems and guardrails determine what survives.

Human judgment guides the process while agents accelerate execution.

In this environment the Frontier Pod becomes the smallest viable operating unit.

A small group running dense feedback loops alongside powerful AI systems.

Organizations that learn to operate this way gain a structural advantage.

Because when implementation becomes cheap, the real advantage comes from learning faster than everyone else.

Everything becomes an experiment.